Kinesis streams can pass multiple available messages in one AWS Lambda function invocation, allowing for further optimization. Kinesis streams let you specify how many AWS Lambda functions can be run in parallel (one per partition), which can be coordinated with your DynamoDB write capacity.

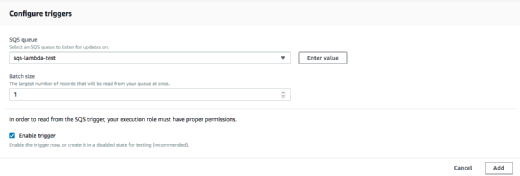

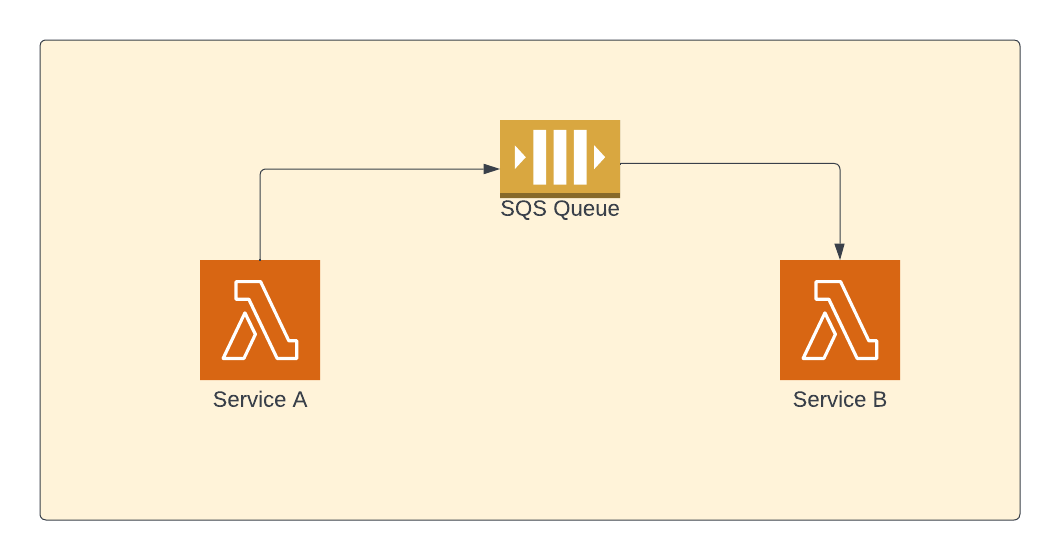

Kinesis streams guarantee ordering of the delivered messages for any given key (nice for ordered database operations). Kinesis streams can drive AWS Lambda functions. The way we take a flood of incoming requests and feed them to AWS Lambda functions for writing in a paced manner to DynamoDB is to replace SQS in the proposed architecture with Amazon Kinesis streams. Anyway, going to use Kinesis as suggested below UPD: If anyone is interested, I have found how to make aws-sdk skip automatic retries in case of throttling: there is a special parameter maxRetries. I would even prefer to lose some records instead of increasing the lag between a record being received and stored in DynamoDB Returns Unprocessed items instead of automatic retry in case of throttling). Writing multiple records with one DynamoDB's BatchWriteItem call (which So I would like to temporarily store data in the queue, send response "200 OK" back to client, and then get queue processed by separate function, Not even throttling itself but the way it is handled while writing data to DynamoDB with AWS SDK: when writing records one by one and getting them throttled, AWS SDK silently retries writing, resulting in increasing of the request processing time from the http client's point of view. The problem that I am trying to address with SQS is DynamoDB throttling. That would be great for me to avoid using SQS at all. Why don't I want to write directly into DynamoDB, without SQS? It should be called frequently, multiple times per second (or at least once per second), because I need all the data from the queue to get into DynamoDB ASAP (that's why calling lambda function B via scheduled events as described here is not a option) The problem is that I can't figure out how to trigger the second lambda function. Reads up to 10 items (on some periodical basis) and writes them to DynamoDB with BatchWriteItem. Then the other function, B, processes the queue: Is placed into SQS queue by my lambda function A. 
So it should work as shown: data coming from many http requests (up to 500 per second, for example) Http requests -> (Gateway API + lambda A) -> SQS -> (lambda B Here is the simplified scheme I am trying to make work:
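With Kinesis in place of SQS, lambda A only has to put each request into the stream and return. The following is a minimal Python sketch under stated assumptions. `kinesis_client` is assumed to be a boto3 Kinesis client, passed in so the function can be exercised without AWS. Using a `user_id` field of the request body as the partition key is a hypothetical choice. Records that share a partition key go to the same shard, and that is where the per-key ordering comes from.

```python
import json


def forward_to_stream(kinesis_client, stream_name, request_body):
    """Lambda A sketch: put one HTTP request body into a Kinesis stream.

    kinesis_client is assumed to be boto3.client("kinesis").
    The "user_id" partition key is hypothetical: records with the same key
    land on the same shard, so they are delivered to lambda B in order.
    """
    kinesis_client.put_record(
        StreamName=stream_name,
        Data=json.dumps(request_body).encode("utf-8"),
        PartitionKey=str(request_body["user_id"]),
    )
    # Reply to the HTTP client right away; lambda B writes to DynamoDB later.
    return {"statusCode": 200, "body": "OK"}
```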
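On the consuming side, each invocation of lambda B receives a batch of Kinesis records, each with a base64-encoded payload under `record["kinesis"]["data"]`. This minimal sketch assumes every payload is a flat JSON object of strings, numbers and booleans (a hypothetical record format). It turns one invocation's records into BatchWriteItem request bodies of at most 25 put requests each, which is the per-call limit of BatchWriteItem. The table name passed in is a placeholder.

```python
import base64
import json

BATCH_LIMIT = 25  # BatchWriteItem accepts at most 25 put/delete requests per call


def to_attribute_value(value):
    """Convert a flat JSON value to DynamoDB's typed attribute format."""
    if isinstance(value, bool):  # check bool first: bool is a subclass of int
        return {"BOOL": value}
    if isinstance(value, (int, float)):
        return {"N": str(value)}
    if isinstance(value, str):
        return {"S": value}
    raise TypeError(f"unsupported type in this sketch: {type(value).__name__}")


def build_batches(event, table_name):
    """Decode one invocation's Kinesis records into BatchWriteItem RequestItems."""
    put_requests = []
    for record in event["Records"]:
        payload = json.loads(base64.b64decode(record["kinesis"]["data"]))
        item = {key: to_attribute_value(val) for key, val in payload.items()}
        put_requests.append({"PutRequest": {"Item": item}})
    # Split into chunks that respect the 25-request limit.
    return [
        {table_name: put_requests[i:i + BATCH_LIMIT]}
        for i in range(0, len(put_requests), BATCH_LIMIT)
    ]
```

Each returned dictionary can be passed as `RequestItems` to one `batch_write_item` call.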

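BatchWriteItem reports throttled writes in `UnprocessedItems` instead of retrying them, which is the behaviour the question relies on. This sketch retries those leftovers a bounded number of times with a short exponential backoff. It then returns whatever is still pending, so the caller can log and drop it rather than let latency grow.

The sketch rests on a few assumptions:

- The attempt count and delays are arbitrary.
- `dynamodb_client` is `boto3.client("dynamodb")`.
- The client's own retries are configured separately, in boto3 through botocore's `Config(retries=...)`, roughly the counterpart of aws-sdk's `maxRetries`. These cover errors such as `ProvisionedThroughputExceededException`, which BatchWriteItem raises when none of the items in the request can be written.

```python
import time


def write_batch(dynamodb_client, request_items, max_attempts=2, backoff_seconds=0.05):
    """Write one BatchWriteItem batch with a bounded number of attempts.

    Returns the items still unprocessed after the last attempt ({} if all
    were written). Dropping them trades completeness for lower latency.
    dynamodb_client is assumed to be boto3.client("dynamodb").
    """
    pending = request_items
    for attempt in range(max_attempts):
        response = dynamodb_client.batch_write_item(RequestItems=pending)
        pending = response.get("UnprocessedItems", {})
        if not pending:
            return {}
        if attempt + 1 < max_attempts:
            # Exponential backoff before resubmitting only the leftovers.
            time.sleep(backoff_seconds * (2 ** attempt))
    return pending
```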
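Coordinating the shard count with DynamoDB write capacity is mostly arithmetic. This rough sketch assumes the following:

- Traffic is steady.
- Lambda runs the default of one concurrent invocation per shard.
- Each Kinesis shard accepts writes of up to 1,000 records or about 1 MB per second.
- DynamoDB uses provisioned capacity, where one write capacity unit (WCU) covers one write per second of an item up to 1 KB.

```python
import math

SHARD_RECORDS_PER_SEC = 1000     # Kinesis per-shard write limit (records/s)
SHARD_BYTES_PER_SEC = 1_000_000  # Kinesis per-shard write limit (~1 MB/s)
WCU_ITEM_BYTES = 1024            # one WCU = one write/s of an item up to 1 KB


def plan_capacity(requests_per_sec, avg_item_bytes):
    """Rough shard count, Lambda concurrency and WCU for a steady request rate."""
    shards = max(
        math.ceil(requests_per_sec / SHARD_RECORDS_PER_SEC),
        math.ceil(requests_per_sec * avg_item_bytes / SHARD_BYTES_PER_SEC),
        1,
    )
    wcu = requests_per_sec * math.ceil(avg_item_bytes / WCU_ITEM_BYTES)
    # Assumes the default parallelization factor of one batch per shard.
    return {"shards": shards, "max_concurrent_lambdas": shards, "wcu": wcu}
```

Take the question's 500 requests per second with items of, say, 400 bytes: `plan_capacity(500, 400)` gives one shard, at most one concurrent lambda B, and 500 WCU.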